Top 6 Security Challenges of AI Voice Technology in 2026

Every voice command holds more than just instructions; it can reveal financial details, passwords, or identity cues that, in the wrong hands, snowball into risk. As adoption of voice-driven AI accelerates, its security challenges have taken center stage for anyone handling sensitive conversations over the phone or on smart devices. These aren’t hypothetical threats: real-world attacks target speech recognition models, hijack authentication with deepfakes, and even extract private data from what users say out loud.

The global Artificial Intelligence (AI) Voice Interaction Service market is heading for steady growth, projected at a 6.5% CAGR from 2025 to 2033. This uptick is fueled by the demand for voice-first customer service, automated workflows, and accessibility. Yet, as deployments rise, the multifaceted security challenges of voice AI deserve sharper focus, not just from IT teams, but from anyone relying on voice tech for daily business.

This blog focuses on the most important voice AI security risks, from deepfake impersonation and unintended recording to adversarial audio and workflow manipulation.

Executive Summary (2026): Voice AI creates new security risks across authentication, data privacy, model integrity, and workflow execution. The biggest threats include voice cloning, inaudible command injection, unintended recording, adversarial audio, and prompt manipulation. Reducing risk requires layered controls such as grounded workflows, access controls, auditability, on-device processing where possible, and continuous monitoring.

Key Takeaways:

- Understanding AI Voice Technology: AI Voice Technology enables machines to understand and respond to spoken language, powering a wide range of applications from virtual assistants to IoT devices.

- Why Businesses Choose Voice AI: Businesses embrace AI voice for operational gains like cost savings and efficiency, but these benefits come paired with specific and evolving security risks unique to voice interactions.

- Invisible Audio Threats: Inaudible commands and voice deepfakes can trick systems into unauthorized actions, bypassing traditional security checks.

- Privacy Risks from Unintended Recordings: Privacy risks arise from unintended recordings and data leaks, as voice devices may capture more than intended, complicating compliance and exposing sensitive information.

- Strategies to Manage Voice AI Security: Managing these multifaceted security challenges of voice AI demands continuous monitoring, solid compliance practices, user awareness, and secure vendor partnerships to reduce vulnerabilities while maintaining usability.

What is AI Voice Technology?

AI voice technology refers to the set of digital systems that convert spoken language into digital signals and back, allowing machines to process, interpret, generate, and respond to human speech.

These systems rely on advanced machine learning and deep learning models designed to recognize voice input, interpret intent, and create natural-sounding verbal output. This technology now powers everything from customer service platforms to virtual assistants and voice-controlled IoT devices, transforming how people interact with digital systems.

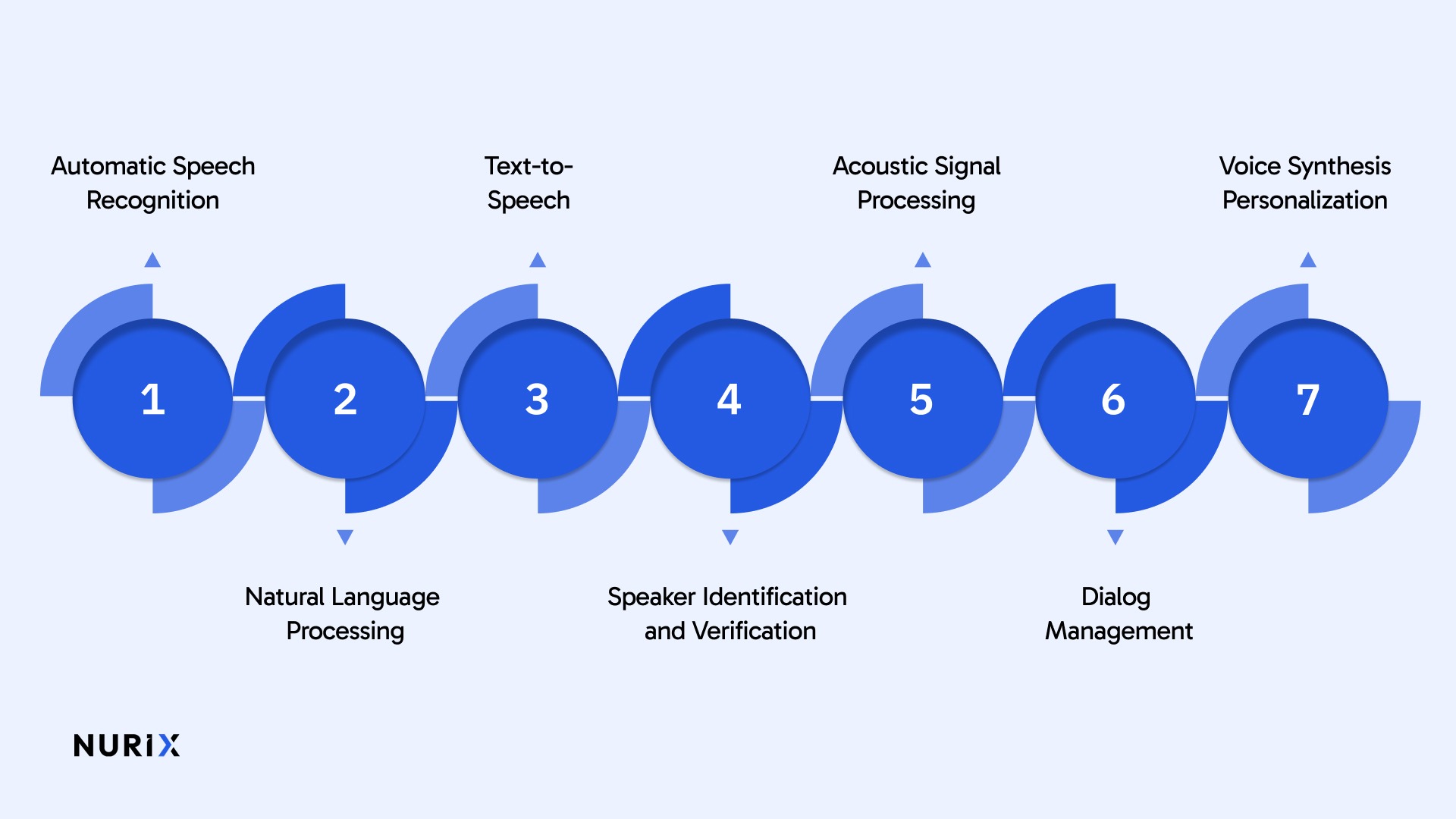

Key Components of AI Voice Technology:

When you’re weighing the security challenges of voice AI, what matters most is not just what powers these systems, but where the cracks can form. Each component below shows how the promise of voice AI and its security gaps are often intertwined in real-world deployments.

- Automatic Speech Recognition (ASR): Converts spoken words into text data. This process involves complex acoustic modeling and language modeling to account for variations in accents, noise, and vocabulary, ensuring that verbal input is accurately transcribed.

- Natural Language Processing (NLP): Interprets the meaning and intent behind the spoken or transcribed words. NLP enables systems to parse context, sentiment, and command structure, resulting in responses that are contextually relevant.

- Text-to-Speech (TTS): Generates lifelike speech from text, allowing systems to respond verbally. Modern TTS solutions use neural networks to replicate tone, prosody, and inflections, resulting in speech output that feels natural and engaging.

- Speaker Identification and Verification: Determines who is speaking, using voice biometrics for security or personalization. These systems analyze distinct vocal characteristics for activities like secure authentication or personalized experiences.

- Acoustic Signal Processing: Filters out background noise and improves audio signals to improve system accuracy in real-world usage settings, such as crowded spaces or over mobile devices.

- Dialog Management: Maintains context and flow within conversations, enabling fluid multi-turn interactions and ensuring the system responds appropriately as the exchange progresses.

- Voice Synthesis Personalization: Adjusts vocal output for brand voice or individual preferences, supporting unique conversational identities and custom user experiences.

Voice AI’s complex components bring both opportunity and risk. Understanding these trade-offs makes the business case clearer, especially with the multifaceted security challenges of voice AI in mind. Here’s what’s driving investment.

Why are Businesses Investing in AI Voice Technology?

Investing in AI voice technology means engaging with a tool that opens both operational advantages and new security considerations. Given the multifaceted security challenges of voice AI, understanding why these investments persist reveals where value and risk intersect in real deployments.

- Automated Claims Processing in Insurance: Nationwide Insurance and others have adopted voice-driven claims submission over the phone, allowing policyholders to start a claim, provide details, and get status updates, reducing the need for agent intervention and speeding up resolutions for events like auto accidents.

- Outbound Collections and Payment Reminders in Banking: Major banks use AI voice agents for routine collection calls and payment reminders, allowing them to handle high-volume outreach without relying entirely on human agents for every interaction.

- Order Tracking and Delivery Scheduling in Logistics: FedEx and UPS have deployed voice-enabled systems that allow customers to reschedule deliveries, check package status, or report issues by phone, driving down contact center loads during peak seasons.

- Telehealth Intake and Patient Triage in Healthcare: Healthcare providers use AI voice assistant systems to handle appointment scheduling, prescription refills, and post-visit surveys, which reduces administrative bottlenecks, keeps nurses focused on care, and meets compliance requirements for accessibility.

- Smart IVR in Retail Customer Service: Retail chains like Walmart and Best Buy use AI-driven phone menus that recognize natural speech, route calls accurately, process returns, and handle stock checks, improving first-call resolution rates and reducing staff turnover caused by repetitive work.

- Compliance-Driven Call Monitoring: Financial services firms use voice analytics to audit all recorded calls for regulatory keywords or legal disclosures, flagging non-compliant interactions for human review and thereby reducing audit costs.

- Hands-Free Equipment Control for Field Technicians: Utilities and telecoms outfit workers with wearable devices powered by voice AI, enabling technicians to retrieve manuals, log tasks, or call for help while keeping both hands free, improving job safety and reducing human error.

Investment in AI voice technology comes with clear benefits, but it also brings exposure to unique and evolving risks. Because these security challenges sit right at the intersection of opportunity and vulnerability, it’s critical to recognize which threats deserve priority attention. Here’s a closer look at those key security challenges.

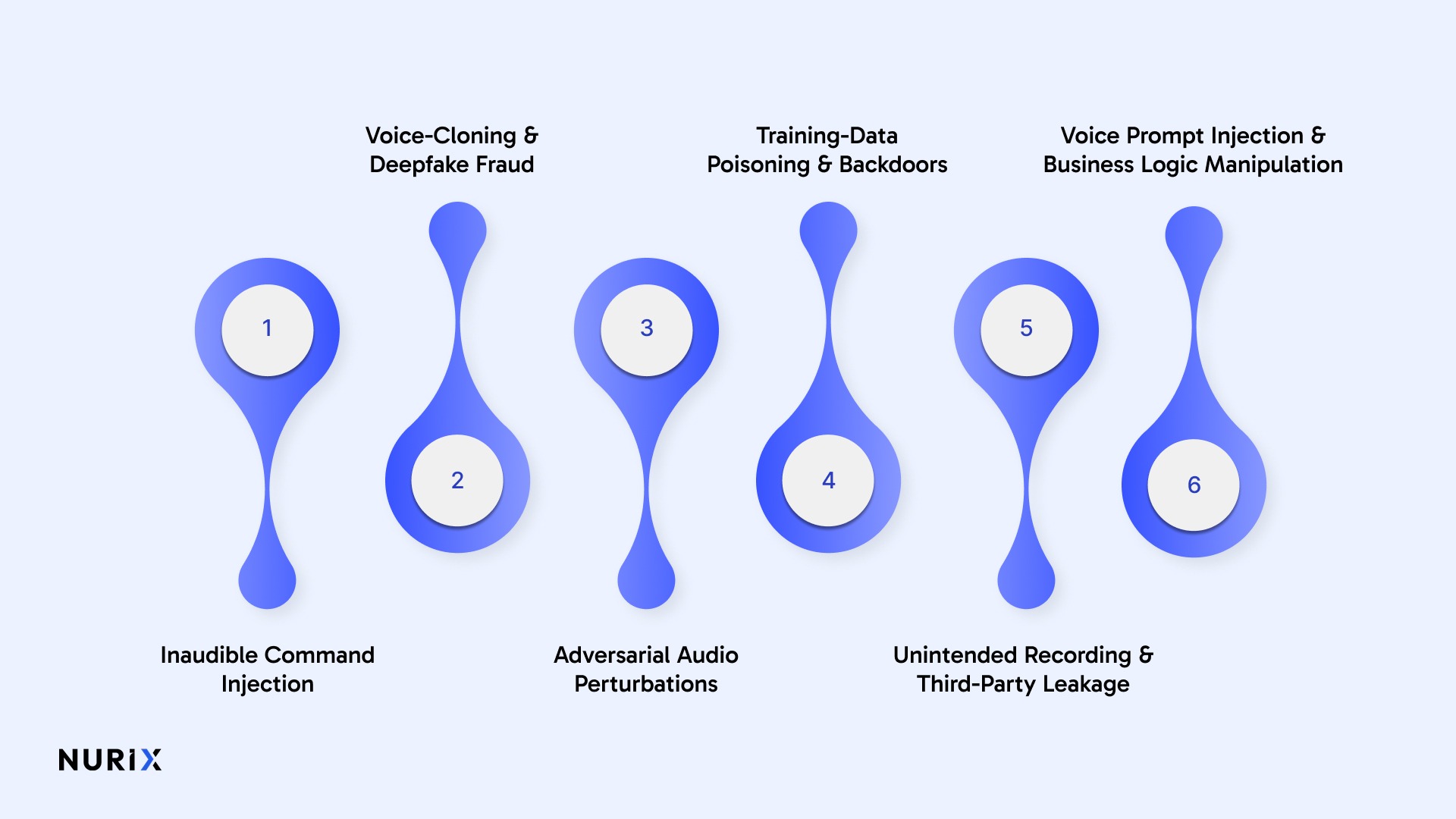

Top Multifaceted Security Challenges of Voice AI

When considering the multifaceted security challenges of voice AI, it’s clear that risks often stem not from a single flaw, but from how various vulnerabilities intersect within real-world use. Understanding these nuanced threats helps pinpoint where security efforts need the most focus.

1. Inaudible Command Injection (Ultrasonic & “Dolphin” Attacks)

Attackers modulate spoken commands onto ultrasonic carriers above 20 kHz, where people cannot hear them, yet non-linearity in the microphone hardware demodulates them back into the voice band. Silent clips embedded in ads, TV audio, or Zoom calls can unlock doors or place orders; proofs of concept succeeded from 25 ft with 0.77-second payloads.

Key details

- Delivery path: Smart-TV speakers, web videos, or meeting audio can embed near-ultrasound triggers that nearby phones obey.

- Range & scale: Lab arrays pushed silent commands across rooms, controlling Siri, Alexa, and car systems from 25 ft.

How the concern is addressed:

- Frequency guards: On-device filters drop everything above the human hearing band, blocking the carrier.

- Command gating: Assistants require a spoken passphrase or PIN before acting on high-risk requests.

- Anomaly sensing: Classifiers flag ultrasound energy patterns with 97% precision in real time.
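
As a rough illustration of the frequency-guard and anomaly-sensing ideas above, the sketch below measures how much of a short audio frame's energy sits at or above a cutoff frequency and flags frames dominated by near-ultrasound content. The function names, the 18 kHz cutoff, and the 0.2 ratio threshold are assumptions chosen for the example, not values from any specific product, and a production system would use an optimized FFT rather than this naive transform.

```python
import cmath
import math


def band_energy_ratio(samples, sample_rate, cutoff_hz=18_000.0):
    """Return the fraction of spectral energy at or above cutoff_hz."""
    n = len(samples)
    total = 0.0
    high = 0.0
    # Naive DFT over the positive-frequency bins (acceptable for short frames).
    for k in range(1, n // 2):
        coeff = sum(
            s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples)
        )
        power = abs(coeff) ** 2
        total += power
        if k * sample_rate / n >= cutoff_hz:
            high += power
    return high / total if total else 0.0


def looks_ultrasonic(samples, sample_rate, cutoff_hz=18_000.0, max_ratio=0.2):
    """Flag a frame whose energy is concentrated above the cutoff (assumed threshold)."""
    return band_energy_ratio(samples, sample_rate, cutoff_hz) > max_ratio


def tone(freq_hz, sample_rate=48_000, n=256):
    """Generate a pure sine tone, used here only as synthetic test input."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


voice_band_frame = tone(375.0)      # energy inside the normal voice band
carrier_frame = tone(21_000.0)      # near-ultrasound carrier
flags = (looks_ultrasonic(voice_band_frame, 48_000),
         looks_ultrasonic(carrier_frame, 48_000))
```

A check like this only works when the capture path samples fast enough to represent the carrier at all (above 40 kHz for a 20 kHz signal), so it complements a hardware or on-device low-pass filter and does not replace one.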

2. Voice-Cloning & Deepfake Fraud

Generative models can replicate a person’s voice from a three-second sample, enabling scammers to request wire transfers or MFA codes; reported cases include a $25 million payment and a $2,540 theft.

Key details

- Attackers can build convincing synthetic voices from short public or captured audio samples.

- Voice-based trust signals are easier to exploit when teams rely on urgency, familiarity, or weak verification steps.

- Organizations using voice biometrics or spoken approvals should review whether those controls remain strong against synthetic speech.

How the concern is addressed:

- Use out-of-band verification for high-risk requests such as fund movement, credential resets, or account changes.

- Strengthen identity checks beyond voice alone, especially in sensitive workflows.

- Add fraud monitoring and synthetic-speech detection where voice is part of the authentication or approval path.
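
One way to picture out-of-band verification is a gate that never lets a voice match alone approve a high-risk request. The sketch below is a minimal illustration under stated assumptions: the intent names, the `OutOfBandVerifier` class, and the six-digit one-time code are hypothetical, and delivery of the code over a separate channel (for example, an app notification) is left out.

```python
import hmac
import secrets

# Hypothetical set of intents that must never be approved by voice alone.
HIGH_RISK_INTENTS = {"wire_transfer", "credential_reset", "account_change"}


class OutOfBandVerifier:
    """Issues single-use codes that are confirmed outside the voice channel."""

    def __init__(self):
        self._pending = {}

    def issue_challenge(self, request_id):
        # The code would be delivered through a separate channel (assumption).
        code = f"{secrets.randbelow(10**6):06d}"
        self._pending[request_id] = code
        return code

    def confirm(self, request_id, submitted_code):
        # pop() makes each code single-use, even after a failed attempt.
        expected = self._pending.pop(request_id, None)
        return expected is not None and hmac.compare_digest(expected, submitted_code)


def authorize(intent, voice_match, oob_confirmed):
    """High-risk intents need both a voice match and out-of-band confirmation."""
    if intent in HIGH_RISK_INTENTS:
        return voice_match and oob_confirmed
    return voice_match
```

With this shape, a cloned voice that passes the biometric check still cannot move funds unless the attacker also controls the second channel.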

3. Adversarial Audio Perturbations

Imperceptible noise (<0.2% amplitude) forces ASR to mis-transcribe or execute rogue commands; music was rewritten into “OK Google, browse to evil.com” while sounding unchanged to humans. One-query black-box attacks now achieve the same mischief.

Key details

- Covert modification: Sub-dB perturbations redirect the transcript without alerting listeners.

- Fast generation: ALIF crafts a successful sample with a single query, cutting attack cost by 97.7%.

- Dual use: Malicious transcripts can inject SQL-like strings into logs or siphon data via call recordings.

How the concern is addressed:

- Input sanitization: Front-end denoisers strip low-energy perturbations before recognition.

- Layered logging: Device audio and server transcript are compared; mismatches trigger audits.
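
The layered-logging idea can be sketched as a comparison between the transcript produced on the device and the one produced on the server, with a large word-level difference triggering an audit. This is a minimal sketch: the function names and the 25% mismatch threshold are assumptions for illustration, and real systems would also normalize punctuation and numerals before comparing.

```python
def word_edit_distance(a, b):
    """Levenshtein distance between two lists of words."""
    prev = list(range(len(b) + 1))
    for i, word_a in enumerate(a, start=1):
        curr = [i]
        for j, word_b in enumerate(b, start=1):
            cost = 0 if word_a == word_b else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def transcript_mismatch(device_text, server_text, max_rate=0.25):
    """Return True when the two transcripts differ by more than max_rate."""
    device_words = device_text.lower().split()
    server_words = server_text.lower().split()
    if not device_words and not server_words:
        return False
    distance = word_edit_distance(device_words, server_words)
    rate = distance / max(len(device_words), len(server_words))
    return rate > max_rate
```

A perturbed clip that the server hears as a command but the device hears as ordinary speech would produce a high mismatch rate and be routed for review.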

4. Training-Data Poisoning & Backdoors

Attackers slip mislabeled or malicious clips into corpora or federated updates; as little as 0.17% tainted audio can force chosen transcriptions while quality metrics stay green.

Key details

- Insider route: Swapping half of a victim’s enrollment clips with attacker audio lets both voices pass authentication at 95% accuracy.

- Federated blind spot: Client-side learning hides raw data, easing silent backdoor insertion.

How the concern is addressed:

- Guardian filter: A secondary CNN screens embeddings and spots poisoned clips with 95%+ accuracy.

- Differential isolation: Crowd-sourced updates run in shadow models for validation before merge.

- Dataset provenance: Cryptographic hashes and signed manifests bind every clip to a verified contributor.
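
To make the dataset-provenance control concrete, the sketch below hashes every clip with SHA-256 and signs the resulting manifest so that later tampering is detectable. It is an illustration under assumptions: the function names are hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real pipeline would more likely use.

```python
import hashlib
import hmac
import json


def build_manifest(clips, contributor_id, signing_key):
    """Hash each clip and sign the manifest (HMAC used as a stand-in signature)."""
    entries = {name: hashlib.sha256(data).hexdigest()
               for name, data in sorted(clips.items())}
    payload = json.dumps({"contributor": contributor_id, "clips": entries},
                         sort_keys=True)
    signature = hmac.new(signing_key, payload.encode("utf-8"),
                         hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def verify_clip(manifest, name, data, signing_key):
    """Accept a clip only if the manifest is authentic and the hash matches."""
    payload = manifest["payload"]
    expected_sig = hmac.new(signing_key, payload.encode("utf-8"),
                            hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_sig, manifest["signature"]):
        return False
    recorded = json.loads(payload)["clips"].get(name)
    if recorded is None:
        return False
    return hmac.compare_digest(recorded, hashlib.sha256(data).hexdigest())
```

A training job that calls a check like `verify_clip` before ingesting audio would reject clips that were swapped, altered, or never listed by a verified contributor.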

5. Unintended Recording & Third-Party Leakage

Voice systems can create privacy risk when they capture more audio than users expect, retain recordings longer than necessary, or expose voice data to broader internal or third-party access than customers realize. U.S. regulators have already taken action against Amazon over allegations involving retention and deletion practices tied to Alexa voice recordings, showing that voice-data governance is not a hypothetical concern.

Key details

- False activations and always-listening designs can increase the risk of collecting unintended speech.

- Voice recordings and transcripts may contain personal, financial, health, or behavioral signals that raise privacy and compliance concerns.

- Governance failures around retention, deletion, access, and vendor handling can create legal and reputational exposure.

How the concern is addressed:

- Minimize collection and retention of raw audio wherever possible.

- Restrict access to recordings, transcripts, and derived data through role-based controls and auditability.

- Define clear deletion, retention, and consent practices for voice data across vendors and internal teams.
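
A retention policy becomes easier to enforce once it is expressed as data. The sketch below selects voice records that are past their retention window or lack consent; the record fields, the 30-day and 180-day limits, and the rule that unconsented records are deleted immediately are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per data type.
RETENTION = {
    "raw_audio": timedelta(days=30),
    "transcript": timedelta(days=180),
}


@dataclass(frozen=True)
class VoiceRecord:
    record_id: str
    kind: str
    created_at: datetime
    consent: bool


def is_expired(record, now):
    """A record expires without consent or once its retention window passes."""
    if not record.consent:
        return True
    # Unknown data types get a zero-length window, so they are deleted by default.
    limit = RETENTION.get(record.kind, timedelta(0))
    return now - record.created_at > limit


def select_for_deletion(records, now):
    """Return the IDs of records a purge job should remove."""
    return [r.record_id for r in records if is_expired(r, now)]
```

Defaulting unknown data types to deletion keeps a new kind of derived data from being retained indefinitely just because nobody added it to the policy.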

6. Voice Prompt Injection & Business Logic Manipulation

Attackers weave malicious sentences across multi-turn conversations to expose system prompts or override policy. Because abuse happens live, fraudulent payments or data leaks occur before alarms fire.

Key details

- Conversation bleed: Skilled phrasing convinces the assistant to reveal internal instructions word-for-word.

- Real-time exposure: Exploits delivered during customer calls can change account data instantly.

- Multi-vector synergy: Attackers blend prompt injection with ultrasound or voice-clone spoofing to evade logs.

How the concern is addressed:

- Context memory checks: Each turn is re-validated against a signed system prompt; deviations are rejected.

- Transfer caps: Large transactions require MFA outside the voice channel, halting automated fraud.

- Red-team rehearsal: Security teams stage prompt-injection drills to fine-tune defense playbooks.
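
The first two controls above can be sketched together: each turn checks that the active system prompt still matches its signature, and transfers above a cap are held until MFA succeeds outside the voice channel. This is a simplified illustration; the function names, the HMAC-based signature, and the 500.0 cap are assumptions, not a description of any particular platform.

```python
import hashlib
import hmac


def sign_prompt(prompt, key):
    """Compute an HMAC-SHA256 signature over the system prompt."""
    return hmac.new(key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()


def prompt_is_intact(prompt, signature, key):
    """Check that the prompt in use still matches the signed original."""
    return hmac.compare_digest(sign_prompt(prompt, key), signature)


def allow_transfer(amount, cap, mfa_passed):
    """Transfers above the cap need MFA completed outside the voice channel."""
    return amount <= cap or mfa_passed


def handle_turn(active_prompt, signature, key, amount=0.0, cap=500.0,
                mfa_passed=False):
    """Validate one conversation turn before any action is executed."""
    if not prompt_is_intact(active_prompt, signature, key):
        return "reject: system prompt changed"
    if not allow_transfer(amount, cap, mfa_passed):
        return "hold: out-of-band MFA required"
    return "proceed"
```

Because both checks run before execution, a successful injection mid-call still has to get past a control the conversation itself cannot rewrite.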

Spotting the multifaceted security challenges of voice AI is only the start; making smart choices during rollout is where the real work happens. Below are practical steps that matter when getting voice AI into the field.

Implementation Considerations and Best Practices

Managing the multifaceted security challenges of voice AI requires more than awareness. It requires technical controls, operational discipline, and clear governance over how voice systems are deployed, monitored, and allowed to act.

The most practical safeguards include the following:

1. Use layered verification for high-risk actions

Voice alone should not authorize sensitive transactions, account changes, or privileged actions. For high-risk workflows, teams should use out-of-band verification, secondary approval steps, or human review before execution.

2. Limit access and define action boundaries

Voice agents should only have access to the systems, data, and actions they actually need. Least-privilege access reduces the blast radius if a workflow is manipulated or misused.
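
A minimal sketch of least-privilege action boundaries is an explicit allowlist per agent that is checked before anything runs. The agent names, action names, and the `execute` helper below are hypothetical and exist only to show the shape of the control.

```python
# Hypothetical allowlist: each agent may only trigger the actions listed here.
AGENT_PERMISSIONS = {
    "billing_agent": frozenset({"read_balance", "send_payment_reminder"}),
    "support_agent": frozenset({"read_order_status", "reschedule_delivery"}),
}


class ActionDenied(Exception):
    """Raised when an agent requests an action outside its allowlist."""


def execute(agent, action, handlers):
    """Run an action only if the agent is explicitly allowed to perform it."""
    allowed = AGENT_PERMISSIONS.get(agent, frozenset())
    if action not in allowed:
        raise ActionDenied(f"{agent} may not perform {action}")
    return handlers[action]()
```

Unknown agents get an empty allowlist, so the default is denial. A manipulated support conversation therefore cannot reach billing actions, even if the model is convinced it should.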

3. Use on-device processing where possible

For some use cases, on-device speech processing can reduce privacy exposure by limiting how much raw audio is transmitted or stored in external environments. This is especially relevant when sensitive information may be spoken during interactions.

4. Control data retention and storage practices

Voice interactions can contain personal, financial, and operationally sensitive information. Organizations should define how long audio, transcripts, and metadata are retained, who can access them, and when they should be deleted.

5. Test for adversarial and synthetic voice threats

Voice AI systems should be evaluated against spoofing, deepfake impersonation, adversarial audio, and prompt manipulation. Security testing should include both model-level and workflow-level attack scenarios.

6. Monitor continuously and investigate anomalies

Security teams should monitor for unusual conversation patterns, policy deviations, sudden spikes in failure or escalation, and other signals that may indicate misuse or system drift. Visibility is essential once voice AI is live.
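
As one simple way to catch the sudden spikes mentioned above, the sketch below compares the latest count of an event (for example, hourly escalations) against recent history using a z-score. The function name and the threshold of 3.0 are assumptions, and a production monitor would typically account for seasonality and low-volume periods as well.

```python
from statistics import mean, pstdev


def is_spike(history, latest, z_threshold=3.0):
    """Flag the latest value if it sits far above the recent baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = pstdev(history)
    if spread == 0:
        # A perfectly flat history: any increase is treated as notable.
        return latest > baseline
    return (latest - baseline) / spread > z_threshold
```

For a history of `[4, 5, 6, 5, 4, 6, 5]` escalations per hour, a new value of 6 stays within the normal range, while a value of 20 is flagged for investigation.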

7. Evaluate vendors for governance, not just features

A voice AI platform should be assessed on auditability, access controls, deployment flexibility, security practices, incident handling, and compliance readiness, not only on latency or conversation quality.

8. Keep humans in the loop where the stakes are high

When the workflow affects payments, compliance decisions, sensitive records, or identity verification, human oversight remains essential. Voice AI should accelerate operations without removing accountability.

Strong voice AI security depends on how systems are deployed, connected, and controlled in production. The safest organizations are the ones that treat security as part of the operating model, not as a patch added later.

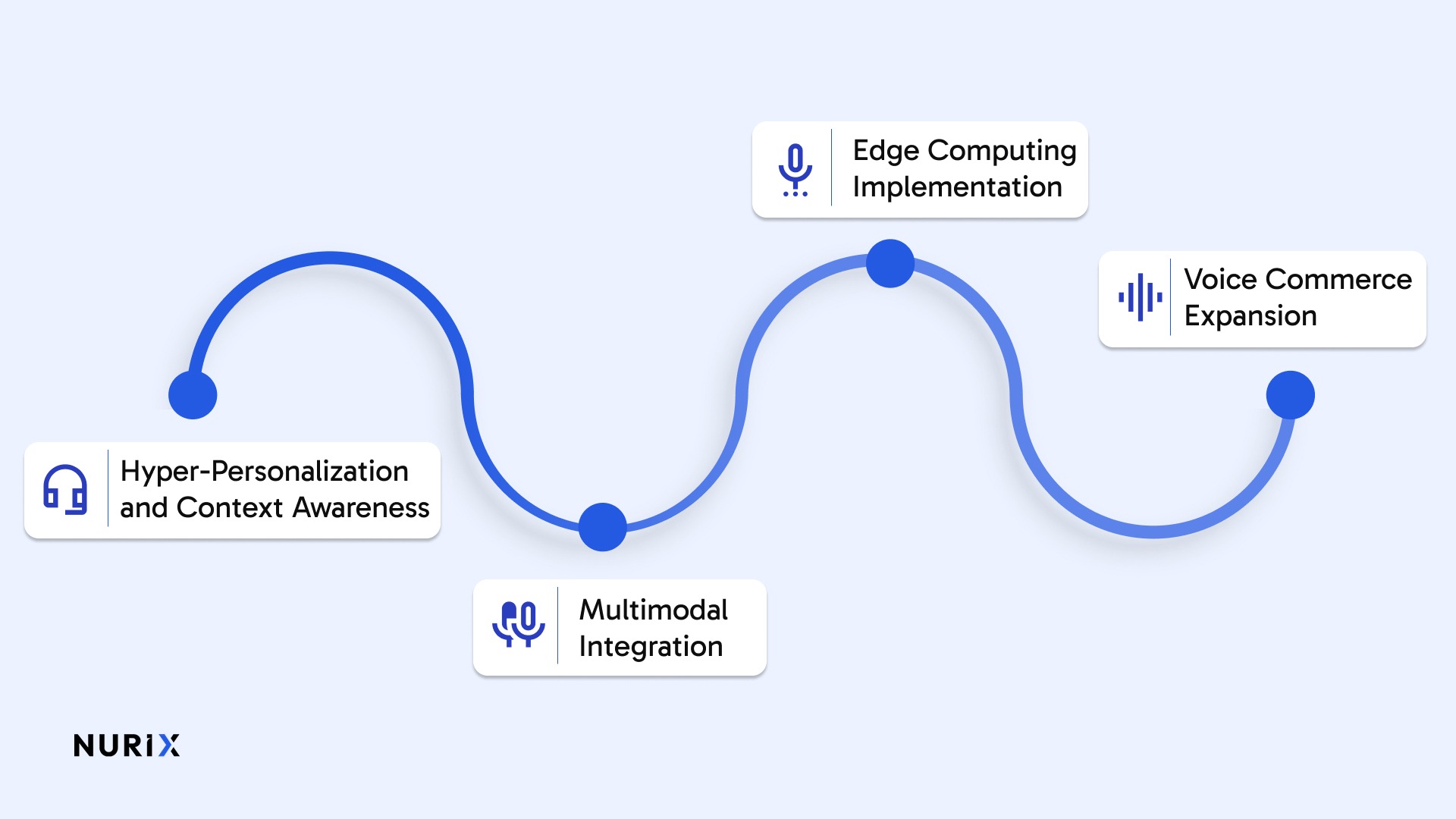

Future of Voice Technology

Looking ahead, the future of voice technology will hinge on how well it balances expanding capabilities with addressing the multifaceted security challenges of voice AI. Here’s a closer look at where the next wave of voice tech is headed and the critical factors that will shape its trajectory.

- Hyper-Personalization and Context Awareness: Voice AI systems are evolving to provide individualized experiences based on user preferences, historical interactions, and contextual understanding. This personalization extends beyond simple command recognition to emotional intelligence and adaptive conversation flows.

- Multimodal Integration: Future voice interfaces will combine speech with visual elements, gesture recognition, and tactile feedback, creating more natural and intuitive user experiences across diverse environments.

- Edge Computing Implementation: To address latency and privacy concerns, organizations are moving voice processing capabilities closer to end users through edge computing architectures. This approach reduces dependency on cloud services while improving response times.

- Voice Commerce Expansion: E-commerce platforms are integrating voice technology for product searches, purchase transactions, and customer support. Voice commerce is projected to generate $11.2 billion in retail sales by 2026.

How NuPlay By Nurix AI Helps Businesses Deploy Voice AI More Securely

NuPlay is an enterprise AI voice and chat platform by Nurix AI that helps organizations deploy voice AI with orchestration, integrations, observability, and enterprise-grade security built into the workflow layer. For teams operating in regulated or high-risk environments, the goal is not just to make voice AI work; it is to make it controllable, auditable, and safer to run in production.

How NuPlay Helps:

- Enterprise governance and compliance controls

NuPlay supports enterprise-grade governance with role-based access, auditability, configurable data retention, and controls designed to help teams operate more safely in regulated environments.

- Workflow execution with system-level control

NuPlay connects voice agents to CRM, ERP, helpdesk, and other operational systems so teams can define how actions are triggered, where approvals are needed, and when human escalation should take over.

- Grounded responses through knowledge-connected workflows

With retrieval-friendly workflows and connected enterprise data, NuPlay helps reduce unsafe or low-confidence responses by keeping interactions tied to approved business context instead of relying only on raw model output.

- Real-time visibility through NuPulse

NuPlay gives teams access to conversation logs, summaries, performance signals, and operational analytics through NuPulse, helping them detect friction, monitor outcomes, and investigate issues more effectively.

- Multi-agent orchestration for controlled complexity

Instead of relying on a single voice flow, NuPlay supports multi-agent orchestration so organizations can manage branching logic, approvals, routing, and high-risk steps with more structure and oversight.

- Human-like interaction with operational safeguards

NuPlay supports low-latency, human-like voice interactions while still giving organizations the governance and workflow controls needed to balance usability with security.

Together, these capabilities help organizations move from experimental voice AI to production-ready deployments with stronger control over privacy, decision flow, and operational risk.

Final Thoughts!

Voice AI security should be treated as a deployment and governance priority, not just a technical afterthought. As voice systems take on more sensitive workflows, organizations need stronger controls around authentication, data handling, model behavior, and real-time decision execution.

The safest approach is to combine layered security practices with platforms that provide visibility, workflow control, and enterprise-grade governance. That is where NuPlay by Nurix AI fits. NuPlay helps organizations deploy voice AI with real-time voice and chat workflows, connected enterprise systems, auditability, observability through NuPulse, and the controls needed to manage risk more effectively in production environments.

Get in touch with us to see how NuPlay helps teams deploy voice AI with stronger operational control, better visibility, and more secure workflow execution.

Unintentional sharing of confidential details during voice interactions can be recorded and stored, increasing the risk of sensitive information exposure.

These attacks use subtle audio modifications that are often inaudible to humans but can mislead voice recognition or authentication systems to behave incorrectly.

Yes, through model inversion techniques, attackers probe AI systems to reconstruct or infer sensitive training data and speaker identities.

Voiceprints can be cloned or mimicked to bypass authentication, making deepfake voice attacks a significant threat to voice-based security.

Voice interactions can contain sensitive personal, financial, or operational information. If recordings or transcripts are retained too long, accessed too broadly, or handled without clear governance, they can create privacy, legal, and trust risks. Organizations should define strict policies for collection, retention, deletion, access, and vendor handling of voice data.
