In 2026, large language models (LLMs) power everything from AI agents to real-time analytics. With rapid advancements in reasoning, multimodality, and real-time data integration, the question becomes more relevant than ever: which LLM is the most advanced today?

This guide compares top contenders such as GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, DeepSeek-V4 Preview, and others to help you understand what “advanced” really means and choose the right model for your needs.

Executive Summary: In 2026, leading large language models differ by reasoning depth, multimodal support, tool use, deployment control, and enterprise readiness. GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, DeepSeek-V4 Preview, Llama 4 Scout and Maverick, Mistral Large 3, and Command A each fit different needs. Choose based on use case, integrations, data control, performance, and cost.

At a glance:

- The leading large language models in 2026 differ by reasoning depth, multimodal capability, tool use, deployment control, and enterprise readiness.

- GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro are strong choices for complex reasoning, coding, research, and enterprise knowledge workflows.

- DeepSeek-V4 Preview stands out for long-context, reasoning-heavy, and agent-focused workflows where cost efficiency and structured problem-solving matter.

- Llama 4 Scout, Llama 4 Maverick, and Mistral Large 3 give teams more deployment flexibility through open-weight models that support customization and infrastructure control.

- NuPlay by Nurix AI applies advanced large language models inside voice and chat workflows, helping enterprises automate lead qualification, support, and workflow execution.

What Is an LLM (Large Language Model) and Why Does It Matter in 2026?

Large language models are AI systems that understand, generate, and process human language at scale. They can answer queries, generate content, analyze documents, and support real-time decision-making.

In 2026, LLMs are no longer experimental tools. They are becoming core infrastructure for sales, support, and operations across enterprises.

The core architecture of LLMs includes:

- Foundation Models: LLMs are trained on large, diverse datasets such as web pages, books, and code. This allows them to power tasks like automated support, document analysis, and knowledge retrieval at scale.

- Transformer Architecture: Most LLMs use transformer models with self-attention mechanisms. This enables better context understanding, leading to more accurate responses and fewer irrelevant outputs in real-world applications.

- Scale: Modern LLMs range from millions to trillions of parameters. Greater scale generally improves reasoning, language understanding, and the ability to handle complex workflows across industries.

- Multimodal Capabilities: Many LLMs now process text, images, audio, and video. This allows businesses to automate tasks like customer interactions, document processing, and real-time insights across multiple formats.

- Enterprise Integration: LLMs are increasingly integrated with CRM systems, support tools, and internal platforms. This helps reduce manual effort, improve response times, and maintain consistency across workflows.
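To make the self-attention idea from the transformer bullet above more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is a simplification: real transformers first pass each token through learned query, key, and value projections and run many attention heads in parallel, while this sketch uses the raw token vectors directly. The function names and the toy input are illustrative assumptions, not part of any specific model.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention: each output row is a weighted
    average of the value rows, weighted by query-key similarity."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors using the attention weights.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Toy input: three token vectors of dimension 2 (illustrative numbers only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens, tokens, tokens)
print(attended)
```

Each output vector mixes information from every token in the sequence, which is what lets the model weigh context when interpreting a word.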

With these building blocks in place, the next sections compare today's leading models and the provider families behind them.

Experience smarter automation with NuPlay by Nurix AI’s LLM-driven AI agents! Improve communication, scale your support, and deliver personalized responses that adapt to your business needs.

Which LLM Is the Most Advanced Today? Top Models Compared (2026)

Curious about which LLM is the most advanced today? In 2026, several models lead the space, each excelling in different areas such as reasoning, multimodal capabilities, and enterprise use.

Here are the most advanced large language models shaping AI today:

1. GPT-5.5 by OpenAI

OpenAI’s GPT-5.5 is its newest advanced model built for complex professional work. It’s designed to handle coding, research, analysis, document-heavy tasks, and multi-step workflows.

Compared with GPT-5.4 Pro in early testing, it offers stronger reasoning, better tool use, and improved performance across technical and knowledge-based tasks.

Key Features:

- Stronger reasoning and tool use: Manages complex, multi-step tasks across coding, research, and analysis. It understands tasks more clearly and executes them more reliably.

- High coding performance: Excels at software engineering tasks, including debugging, testing, refactoring, and working with large codebases.

- Support for professional workflows: Handles document-heavy projects, research tasks, information synthesis, and business analysis, making it suitable for enterprise teams working on detailed knowledge tasks.

2. Claude Opus 4.7 by Anthropic

Claude Opus 4.7 is Anthropic’s most capable generally available model. It’s built for complex reasoning, coding, knowledge work, vision tasks, and long-context workflows. It works well for enterprise use cases that require reliable analysis and structured outputs.

Key Features:

- Long-context processing: Handles large documents and extended workflows. This makes it useful for research, compliance reviews, and other knowledge-heavy tasks.

- Strong reasoning and coding support: Manages multi-step logic, structured analysis, and complex coding tasks with improved consistency.

- Flexible enterprise deployment: Available through the Claude API, Amazon Bedrock, Vertex AI, and Microsoft Foundry, giving teams multiple options for deployment.

3. Gemini 3.1 Pro by Google DeepMind

Gemini 3.1 Pro is Google DeepMind’s advanced reasoning model for complex tasks across coding, research, analysis, and multimodal workflows.

It supports long-context work and precise tool use, making it useful for teams building AI workflows within Google’s ecosystem.

Key Features:

- Improved reasoning and reliability: Provides stronger reasoning, factual consistency, and more dependable outputs for complex tasks.

- Agentic workflow support: Supports tool-heavy workflows that require careful execution across multiple steps.

- Built for the Google ecosystem: Works through Google AI Studio, the Gemini API, and Vertex AI, making it a good fit for teams already using Google’s AI environment.

4. DeepSeek-V4 Preview

DeepSeek-V4 Preview is DeepSeek’s latest open-source preview model series, available in Pro and Flash versions. It supports a 1-million-token context window, making it useful for long-context reasoning, coding, research, and agent workflows.

Since it is still a preview release, teams should evaluate it carefully before using it in production-critical workflows.

Key Features:

- 1 million token context window: Supports very large inputs, making it useful for long documents, research tasks, and complex multi-step workflows.

- Pro and Flash model options: DeepSeek-V4-Pro is designed for higher performance, while DeepSeek-V4-Flash supports faster, more cost-effective use cases.

- Open-source availability: Gives teams more flexibility to test, customize, and deploy the model across different environments.
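Before relying on a 1-million-token window, it helps to check whether a given input is likely to fit. The sketch below is a rough estimate only. It assumes about four characters per token, a common rule of thumb for English text rather than a property of DeepSeek's tokenizer, and the `reserved_for_output` budget is a hypothetical parameter chosen for illustration.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate. The 4-characters-per-token ratio is a rule of
    thumb for English text, not the behavior of any specific tokenizer."""
    return int(len(text) / chars_per_token) + 1

def fits_in_context(text: str,
                    context_window: int = 1_000_000,
                    reserved_for_output: int = 8_000) -> bool:
    """Return True if the estimated prompt size leaves room for the reply."""
    return estimate_tokens(text) <= context_window - reserved_for_output

# Illustrative input: roughly one million characters of text.
document = "word " * 200_000
print(estimate_tokens(document), fits_in_context(document))
```

For production use, count tokens with the provider's own tokenizer, since ratios vary by language and content type.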

5. Llama 4 Scout and Llama 4 Maverick by Meta

Llama 4 is Meta’s open-weight model family, led by Llama 4 Scout and Llama 4 Maverick. These models are designed for teams that want greater flexibility in deploying and customizing AI systems.

They support native multimodal tasks and long-context workflows, making them useful for enterprise teams working with documents, visuals, research, and knowledge-heavy processes.

Meta describes Llama 4 Scout and Maverick as open-weight, natively multimodal models with long-context support.

Key Features:

- Open-weight deployment flexibility: Teams have more control over how the models are deployed, hosted, and customized for internal systems and workflows.

- Native multimodal capability: The models handle text and image inputs, making them suitable for document review, visual analysis, and workflows that combine different data types.

- Long-context support: Llama 4 Scout and Maverick are designed to process larger inputs, helping teams manage extended research, analysis, and knowledge-heavy tasks.

6. Mistral Large 3 by Mistral AI

Mistral Large 3 is Mistral AI’s flagship open-weight model built for enterprise and production use. It supports multilingual, multimodal, and agentic workflows, delivering strong performance while giving teams greater deployment control.

Key Features:

- Open-weight enterprise model: Allows teams to customize, fine-tune, and deploy the model across different production environments with more flexibility.

- Multilingual and multimodal support: Works across multiple languages and can process both text and visual inputs. This makes it suitable for global operations, research tasks, and content workflows.

- Agentic workflow capability: Supports advanced workflows where the model needs to reason, use tools, and assist with multi-step tasks.

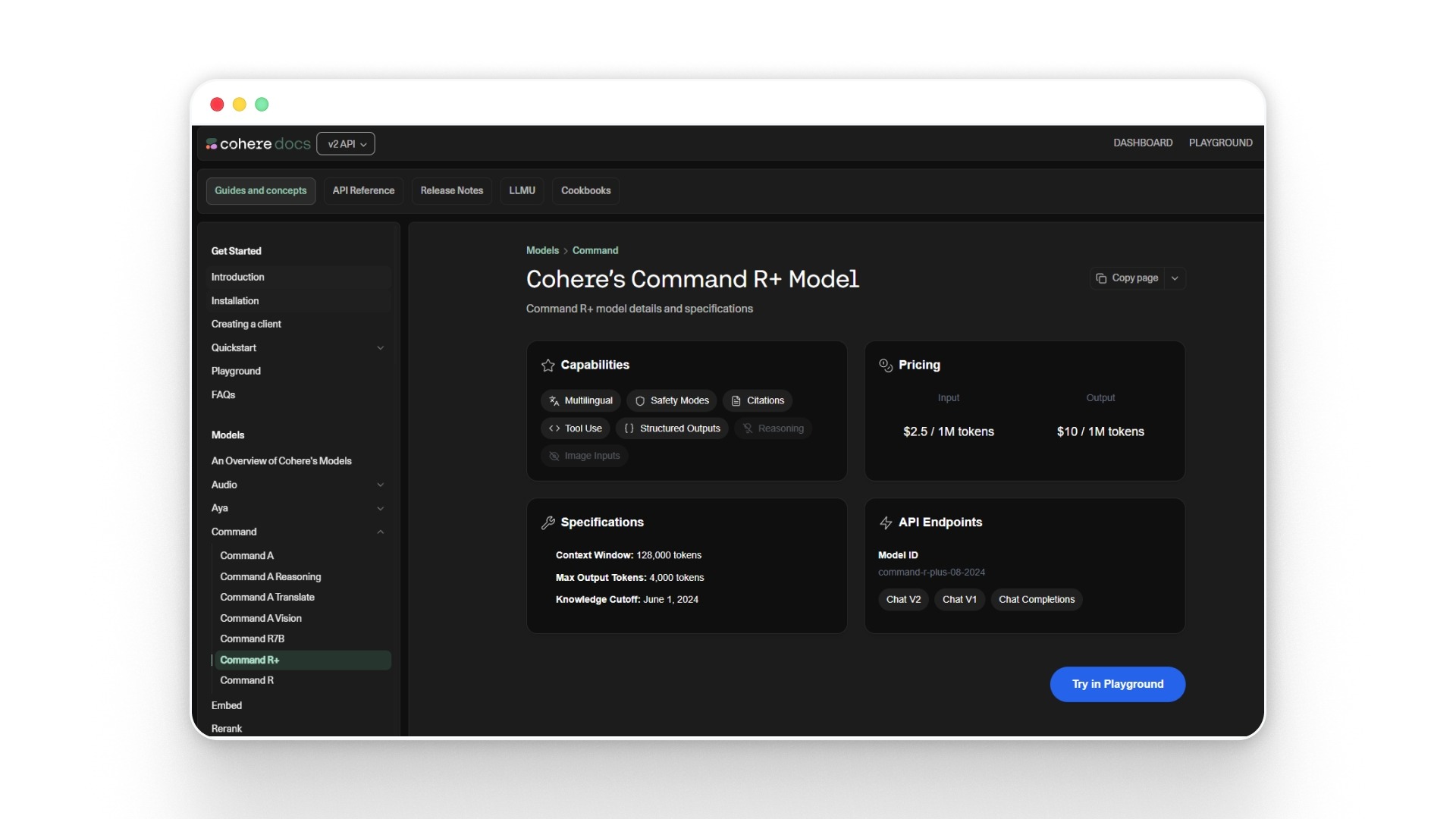

7. Command A by Cohere

Command A is Cohere’s newer enterprise-focused model for language tasks. It replaces Command R+ as the recommended default for most business use cases.

Command R+ remains relevant for teams that need complex retrieval-augmented generation (RAG) and multi-step tool workflows.

Key Features:

- Enterprise-ready language performance: Supports tasks such as summarization, knowledge retrieval, internal search, and structured output generation.

- Strong fit for retrieval workflows: Works well with Cohere’s broader retrieval tools, including Embed and Rerank, making it useful for knowledge-heavy applications.

- Recommended default model: Cohere positions Command A as the better general choice for most use cases, while Command R+ remains suitable for more specialized retrieval-driven or tool-heavy workflows.
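The retrieval workflow mentioned above usually runs in two stages: a fast similarity search to shortlist documents, then a reranking pass to pick the best ones for the prompt. The sketch below shows that shape with toy stand-ins. The `embed` and `rerank` functions here are simple word-overlap scorers written for illustration; they are not Cohere's Embed or Rerank APIs, and the sample documents are made up.

```python
import math
from collections import Counter

def embed(text):
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real model)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

def retrieve(query, docs, k=3):
    """Stage 1: cheap similarity search over all documents."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]

def rerank(query, candidates, top_n=1):
    """Stage 2: re-score the shortlist. This toy scorer uses the fraction
    of query terms found in each candidate."""
    terms = set(query.lower().split())
    def score(d):
        return len(terms & set(d.lower().split())) / max(len(terms), 1)
    return sorted(candidates, key=score, reverse=True)[:top_n]

def build_prompt(query, docs):
    """Assemble a grounded prompt from the reranked context."""
    context = "\n".join(f"- {d}" for d in rerank(query, retrieve(query, docs)))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# Made-up knowledge base for illustration.
docs = [
    "refunds are processed within five business days",
    "our office is closed on public holidays",
    "shipping is free for orders over fifty dollars",
]
prompt = build_prompt("how long do refunds take", docs)
print(prompt)
```

In a real system, the two scorers would be replaced by an embedding model and a dedicated reranker, and the resulting prompt would be sent to the generation model.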

These models differ by deployment control, reasoning depth, multimodal support, enterprise workflow fit, and cost efficiency.

Open-weight models like Llama 4 Scout, Llama 4 Maverick, Mistral Large 3, and DeepSeek-V4 Preview offer greater deployment and infrastructure flexibility. On the other hand, GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, and Command A support specialized reasoning, tool use, and enterprise workflow needs.
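One way to turn these criteria into a decision is a simple weighted scoring matrix. The sketch below is only a template: the model names, weights, and scores are placeholders, not measurements of any model discussed here. Teams would fill in their own numbers from internal evaluations.

```python
def rank_models(scores, weights):
    """Rank candidates by the weighted average of their per-criterion scores."""
    total_weight = sum(weights.values())
    ranked = []
    for name, per_criterion in scores.items():
        total = sum(weights[c] * per_criterion[c] for c in weights) / total_weight
        ranked.append((name, total))
    # Highest weighted score first.
    return sorted(ranked, key=lambda item: item[1], reverse=True)

# Placeholder weights: how much each criterion matters to this hypothetical team.
weights = {"reasoning": 3, "multimodal": 1, "deployment_control": 2, "cost": 2}

# Placeholder scores on a 0-5 scale. These are NOT real benchmark results.
scores = {
    "hosted_model_a": {"reasoning": 5, "multimodal": 4, "deployment_control": 2, "cost": 2},
    "open_weight_model_b": {"reasoning": 4, "multimodal": 3, "deployment_control": 5, "cost": 4},
}

ranking = rank_models(scores, weights)
print(ranking)
```

Changing the weights changes the winner, which is the point: the "most advanced" model depends on what a given workflow values most.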

If you're curious about how these models stack up against each other, let's take a quick tour through the popular types of LLMs out there today and see what makes each of them tick.

What Are the Major LLM Model Families Available Today?

Major AI providers now offer large language model families designed for reasoning, dialogue, coding, multimodal work, retrieval, and enterprise deployment.

These models are usually grouped by provider ecosystem, with each family offering different strengths in performance, context handling, integrations, and deployment control.

Below is a breakdown of key LLM model families used across enterprise and production environments.

1. OpenAI LLM Models

OpenAI’s GPT models are designed for reasoning, coding, multimodal tasks, tool use, and professional workflows. Its current lineup includes advanced models for complex work and smaller variants for lower-latency, cost-efficient execution.

OpenAI describes GPT-5.5 as built for complex work such as coding, research, information analysis, document and spreadsheet creation, and tool use.

2. Anthropic LLM Models

Anthropic’s Claude models focus on reasoning, coding, long-context work, vision, and enterprise-grade reliability. Claude Opus 4.7 is Anthropic’s most capable generally available model for complex tasks, while Sonnet and Haiku cover balanced and faster workloads.

3. Google LLM Models (Gemini)

Google’s Gemini models are multimodal systems designed for reasoning, software engineering, agentic workflows, and integration with the Google ecosystem.

Gemini 3.1 Pro is best for complex tasks that require advanced reasoning across modalities, while Gemini 3 Flash focuses on speed and efficiency.

4. xAI LLM Models (Grok)

xAI’s Grok models are designed for reasoning, tool use, real-time search integration, and agentic workflows. Grok 4.20 is the newer flagship model, while Grok 4.20 Multi-agent is optimized for multi-agent research workflows.

5. Other Notable LLM Families

Some models are widely used outside closed model ecosystems, especially where teams need open-weight deployment, cost control, retrieval, or enterprise customization.

Now that we’ve covered the major LLM model families and how they differ, the next section focuses on practical recommendations based on real-world use cases.

Which LLM Should You Choose Based on Your Use Case?

This section highlights the most suitable LLMs for key application scenarios in 2026. Each recommendation is based on model capabilities, including reasoning, multimodal support, deployment flexibility, and enterprise readiness.

Below is a structured view of how leading LLMs align with different use cases:

Building on the strengths and capabilities of today’s most advanced LLMs, NuPlay by Nurix AI brings these innovations directly into your business environment with enterprise-grade orchestration and workflow automation.

How Does NuPlay by Nurix AI Help You Apply Advanced LLMs in Real Workflows?

NuPlay by Nurix AI is an enterprise-grade voice and chat AI platform that automates sales, support, and knowledge workflows through orchestrated AI agents.

Enterprises often struggle to move from evaluating LLMs to actually deploying them in production. Integrating models into existing systems, maintaining response quality, and managing workflows at scale can quickly become complex.

NuPlay by Nurix AI addresses this by orchestrating AI agents across systems, allowing businesses to apply LLM capabilities directly to real-world operations such as customer support, sales conversations, and document processing.

Key Features of NuPlay by Nurix AI:

- Voice AI with Low Latency: Supports real-time, voice-to-voice interactions across multiple languages, enabling faster response times in customer-facing workflows.

- Custom AI Agents for Specific Workflows: AI agents are configured for use cases like lead qualification, support automation, and document handling, improving efficiency and reducing manual intervention.

- Deep System Integrations: Connects with CRM platforms, support tools, and internal systems, ensuring LLM outputs are aligned with real-time business data.

- Enterprise-Grade Security and Compliance: Supports secure deployments with controls for data access, logging, and compliance requirements across regulated environments.

- Scalable Deployment Architecture: Designed to handle high-volume interactions while maintaining consistent performance across multiple workflows.

- Multi-Modal Interaction Support: Enables both voice and text-based interactions, supporting use cases that require flexibility across channels.

As you evaluate which LLM is the most advanced today, the next step is understanding how these models perform in real-world conditions and what to expect as they continue to evolve.

What Will the Next Generation of LLMs Look Like?

Large language models are expanding beyond static text systems to become real-time, multimodal, and highly specialized platforms. The next generation of LLMs will build on today’s progress in multimodality, retrieval, tool use, and agent workflows, with a stronger focus on accuracy, efficiency, and enterprise control.

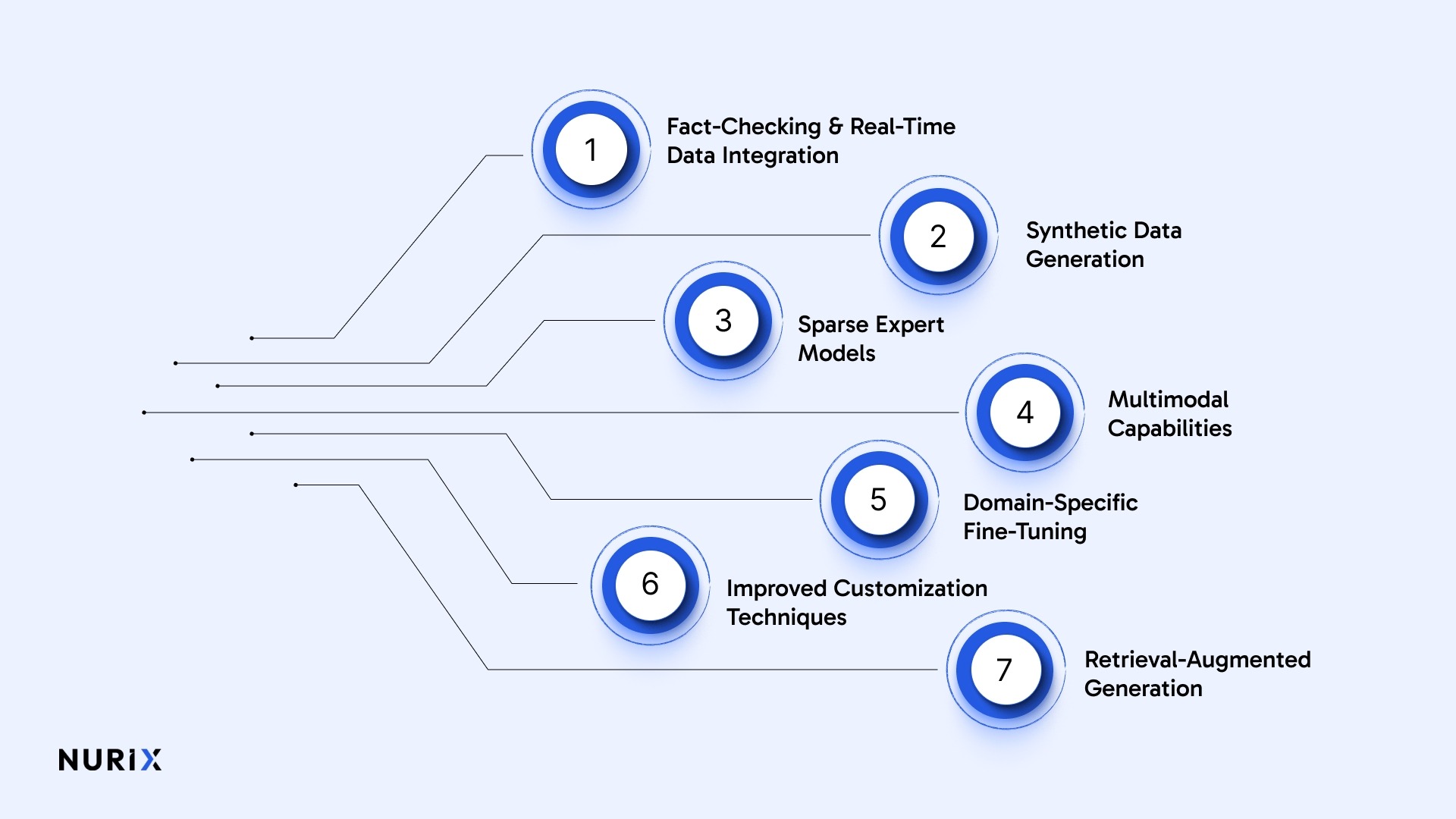

Here are the key developments shaping the future of LLMs:

- Fact-Checking and Real-Time Data Integration: Next-gen LLMs will reference live external data sources for instant fact-checking, providing up-to-date, verifiable answers and overcoming the limits of static training data.

- Synthetic Data Generation: Researchers are working on LLMs that generate their own synthetic training data. This will speed up training, reduce reliance on curated data, and make models more context-aware.

- Sparse Expert Models: Sparse mixture-of-experts architectures activate only a small subset of expert sub-networks for each input instead of the full model. This cuts the compute needed per token and makes advanced AI capabilities more practical in resource-constrained deployments.

- Multimodal Capabilities: Upcoming LLMs will handle audio and video as natively as they handle text and images today, enabling richer multi-format interactions and broader AI-powered applications.

- Domain-Specific Fine-Tuning: Ongoing advances in fine-tuning will enable highly specialized LLMs optimized for precise workflows across sectors such as healthcare, finance, and legal advisory services.

- Improved Customization Techniques: Improved fine-tuning methods, including reinforcement learning from human feedback (RLHF), will align model responses more closely with user intent and improve their accuracy and relevance. These techniques will continue to influence which LLM counts as the most advanced for a given custom application.

- Retrieval-Augmented Generation (RAG): Combining generative AI with real-time data retrieval, RAG-equipped LLMs will deliver highly contextual, accurate responses to industries that demand precise, up-to-date information.
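The sparse expert idea in the list above can be illustrated with a small top-k routing sketch. A router scores every expert, only the highest-scoring few are actually run, and their outputs are blended. This is a toy illustration under simplifying assumptions: the experts are plain scalar functions, and the gate scores are hard-coded, whereas a real model computes them with a learned router.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, gate_logits, experts, top_k=2):
    """Route input x to the top_k experts by gate score and mix their outputs.
    Only the selected experts are evaluated; the rest are skipped."""
    chosen = sorted(range(len(experts)),
                    key=lambda i: gate_logits[i], reverse=True)[:top_k]
    # Renormalize the gate scores over the selected experts only.
    mix = softmax([gate_logits[i] for i in chosen])
    output = sum(w * experts[i](x) for w, i in zip(mix, chosen))
    return output, chosen

# Four toy "experts" (hypothetical scalar functions for illustration).
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]

# Hard-coded gate scores; a real router would compute these from x.
gate_logits = [0.1, 2.0, 2.0, -1.0]

y, used = moe_forward(3.0, gate_logits, experts)
print(y, used)
```

With `top_k=2` and four experts, half of the expert computation is skipped for this input, which is where the efficiency gain comes from.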

As LLMs continue to grow, the definition of the “most advanced” model will keep shifting based on real-world performance and adaptability. The real advantage will come from how effectively these models are applied within business workflows.

Conclusion

The most advanced LLM in 2026 depends on how you define performance. GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, DeepSeek-V4 Preview, Llama 4 Scout and Maverick, Mistral Large 3, and Command A each lead in different areas, from reasoning and multimodal workflows to open deployment and enterprise retrieval.

The right choice comes down to your use case, integration needs, data control, cost, and how the model performs in real workflows.

NuPlay by Nurix AI is an enterprise-grade voice and chat AI platform that helps teams apply these LLMs through orchestrated AI agents. It connects models to business systems and supports workflow automation across sales, support, and operations, providing stronger visibility and control.

Get in touch with us to reduce response times, automate workflows, and see how NuPlay by Nurix AI drives real operational results.

Author: Sakshi Batavia — Marketing Manager

Sakshi Batavia is a marketing manager focused on AI and automation. She writes about conversational AI, voice agents, and enterprise technologies that help businesses improve customer engagement and operational efficiency.
